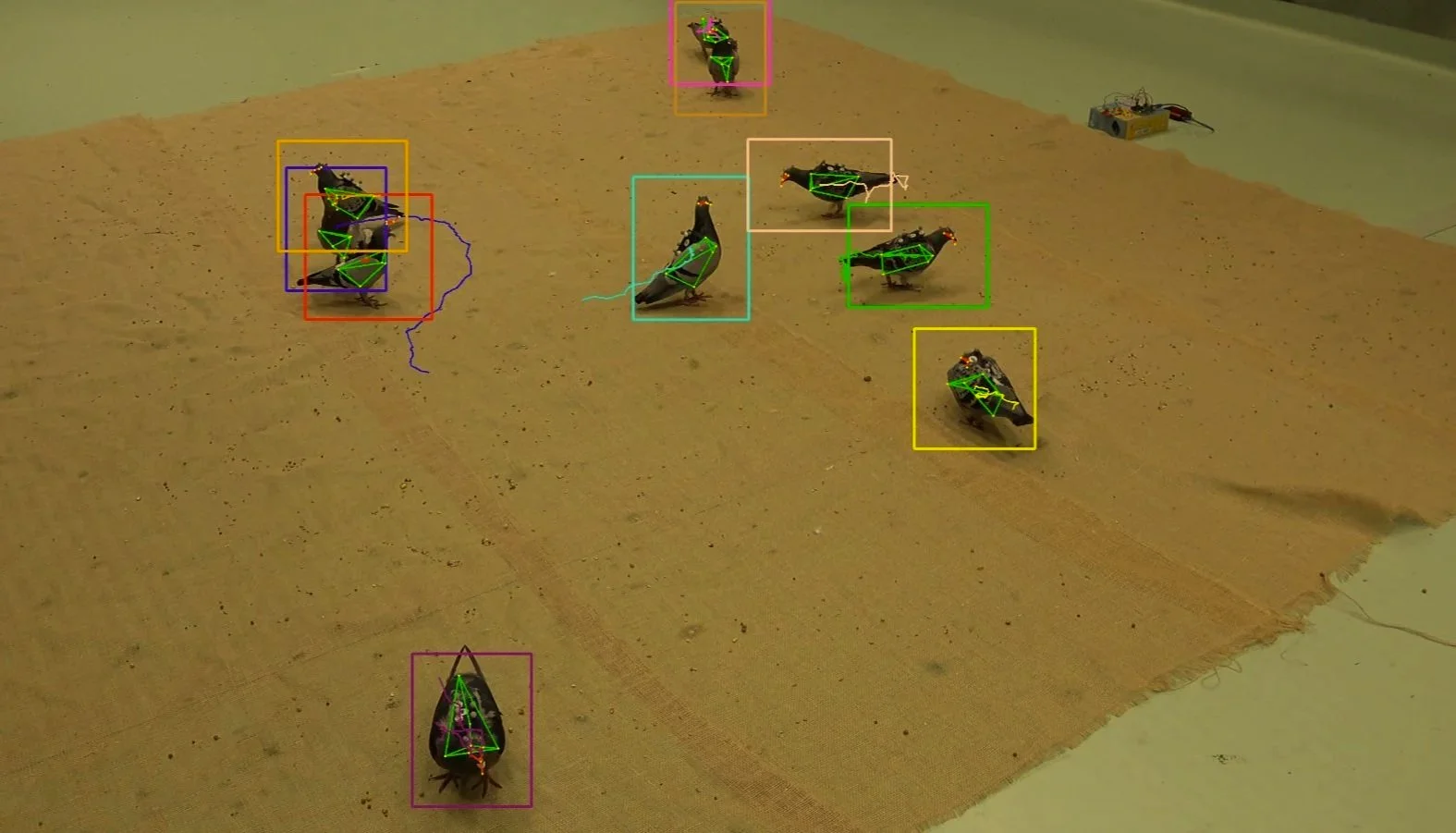

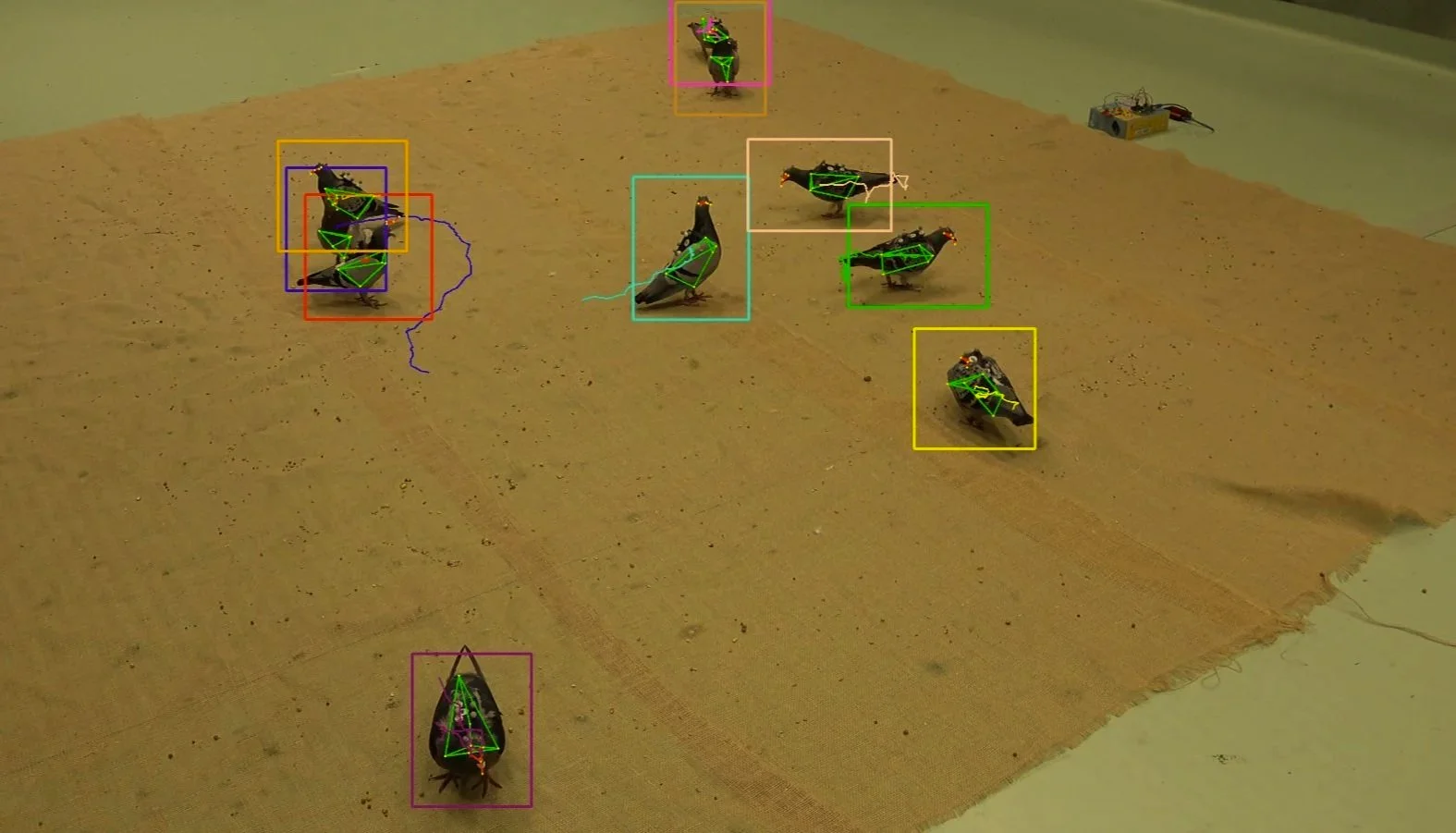

My research on sensory ecology focuses on the mechanisms behind complex animal behavior, which I study by measuring movement and posture. I use computer vision and AI to automatically measure subtle differences in the behavioral patterns of individuals in a group by tracking their interactions in three dimensions. 3D tracking and individual identification offer a remarkable window into complex attributes such as an animal's gaze or visual field of view. Since 2018, I have developed several fundamental datasets, tracking methods, and infrastructures with birds as my primary system, and I am now working with wild birds and primates on topics such as social learning and tool use. I am also interested in virtual reality systems for animals, which allow direct study of behavioral mechanisms by triggering visuo-motor responses with artificial visual stimulation.